EthEl - A Principled Ethical Eldercare Robot

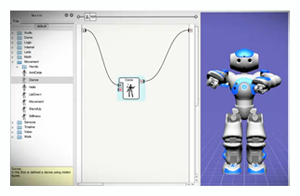

Researchers Michael Anderson from the University of Hartford and Susan Leigh Anderson from the University of Connecticut have developed an approach to computing ethics that entails the discovery of ethical principles through machine learning and the incorporation of these principles into a system’s decision procedure. They've programmed their system into the robot NAO, manufactured by Aldebaran Robotics. It is the first robot to have been programmed with an ethical principle.

Imagine being a resident in an assisted-living facility — a setting where robots will probably become commonplace soon. It is almost 11 o’clock one morning, and you ask the robot assistant in the dayroom for the remote so you can turn on the TV and watch The View. But another resident also wants the remote because she wants to watch The Price Is Right. The robot decides to hand the remote to her. At first, you are upset. But the decision, the robot explains, was fair because you got to watch your favorite morning show the day before. This anecdote is an example of an ordinary act of ethical decision making, but for a machine, it is a surprisingly tough feat to pull off.

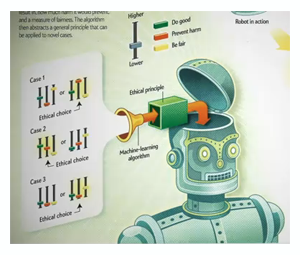

Robots that make autonomous decisions, such as those being designed to assist the elderly, may face ethical dilemmas even in seemingly everyday situations. One way to ensure ethical behavior in robots that interact with humans is to program general ethical principles into them and let them use those principles to make decisions on a case-by-case basis. Artificial Intelligence techniques can produce the principles themselves by abstracting them from specific cases of ethically acceptable behavior using logic. The researchers have followed this approach and for the first time programmed a robot to act based on an ethical principle.
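
The idea of abstracting a principle from specific cases and then applying it case by case can be illustrated with a toy sketch. This is not the researchers' system; it is a minimal Python illustration under stated assumptions. The duty names, the integer satisfaction scale, the example cases, and the simple "lower bound on duty differences" learning rule are all hypothetical and chosen only to make the idea concrete.

```python
from typing import Dict, List, Tuple

# Hypothetical duties, named only for illustration (not from the source).
DUTIES = ("avoid_harm", "respect_autonomy", "fairness")

Action = Dict[str, int]    # duty -> assumed satisfaction level, e.g. -2..2
Clause = Tuple[int, ...]   # lower bounds on per-duty differences


def differential(a: Action, b: Action) -> Clause:
    """Per-duty difference in satisfaction between action a and action b."""
    return tuple(a[d] - b[d] for d in DUTIES)


def covers(clause: Clause, diff: Clause) -> bool:
    """A clause covers a differential that meets every one of its lower bounds."""
    return all(x >= lo for lo, x in zip(clause, diff))


def learn_principle(cases: List[Tuple[Action, Action]]) -> List[Clause]:
    """Abstract a principle from (preferred, rejected) action pairs.

    Each case adds its differential as a clause unless an existing clause
    already covers it. A clause that would also endorse the reverse of a
    known case signals inconsistent training data.
    """
    positives = [differential(p, r) for p, r in cases]
    negatives = [differential(r, p) for p, r in cases]
    principle: List[Clause] = []
    for diff in positives:
        if any(covers(c, diff) for c in principle):
            continue
        if any(covers(diff, n) for n in negatives):
            raise ValueError(f"inconsistent cases near {diff}")
        principle.append(diff)
    return principle


def preferable(principle: List[Clause], a: Action, b: Action) -> bool:
    """Action a is preferable to b if any clause covers their differential."""
    return any(covers(c, differential(a, b)) for c in principle)


# Two made-up training cases: (ethically preferred action, rejected action).
cases = [
    # Hand the remote to the resident who did not get a turn yesterday.
    ({"avoid_harm": 0, "respect_autonomy": 1, "fairness": 1},
     {"avoid_harm": 0, "respect_autonomy": 1, "fairness": -1}),
    # Alert staff rather than stay silent when harm is likely.
    ({"avoid_harm": 2, "respect_autonomy": -1, "fairness": 0},
     {"avoid_harm": -2, "respect_autonomy": 1, "fairness": 0}),
]
principle = learn_principle(cases)

# A new, unseen pair of candidate actions is judged with the learned principle.
option_a = {"avoid_harm": 0, "respect_autonomy": 2, "fairness": 2}
option_b = {"avoid_harm": 0, "respect_autonomy": 1, "fairness": -1}
print(preferable(principle, option_a, option_b))
```

In this sketch the learned principle is just a list of thresholds: a new action is preferred when its advantage on every duty is at least as large as in some previously judged case. A real system would need a far richer learning mechanism and carefully elicited cases.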

Allegro CL's Role

"Regarding why Allegro CL," states Michael Anderson, "We are developing a general ethical dilemma analyzer (GenEth) for which the work done with Nao was preliminary. In GenEth, general ethical principles are abstracted from particular ethical dilemmas. Minimal assumptions are made as to not overly bias the learning mechanism; this includes a minimal commitment to the representation scheme used to represent principles. As cases are presented to the system, the representation is learned along with the principle. ACL's ability to handle such dynamic representations makes it ideal for this aspect of our work. Further, as the learning process is ongoing, ACL's built-in persistence facilitates this."

Shaping the future

The study of machine ethics is only at its beginnings. Though preliminary, our results give us hope that ethical principles discovered by a machine can be used to guide the behavior of robots, making their behavior toward humans more acceptable. Instilling ethical principles into robots is significant because if people were to suspect that intelligent robots could behave unethically, they could come to reject autonomous robots altogether. The future of AI itself could be at stake.

Interestingly, machine ethics could end up influencing the study of ethics. The “real world” perspective of AI research could get closer to capturing what counts as ethical behavior in people than does the abstract theorizing of academic ethicists. And properly trained machines might even behave more ethically than many human beings would, because they would be capable of making impartial decisions, something humans are not always very good at. Perhaps interacting with an ethical robot might someday even inspire us to behave more ethically ourselves.

Click here to watch the video, The Ethical Robot

Click here to read the Scientific American article, "Robot Be Good: A Call for Ethical Autonomous Machines"

To read their full academic research paper, see "ETHEL: Toward a Principled Ethical Eldercare Robot"

See also another application description from the same authors: GenEth - A General Ethical Dilemma Analyzer

| Copyright © Franz Inc., All Rights Reserved | Privacy Statement |
